As the owner of a self-funded business, I like to get as much as I can for free before trying to convince our finance director to spend any of our hard-earned bootstrapping funds. Besides being a businesswoman, I am a research analyst with a solid background in computer science, and a bit of a geek by definition.

As an SEO content writer, what I mostly try to do is hunt down the most significant sources of free data and wrangle them into something insightful. I do this because I see little value in client advice that is not grounded in results. I believe it is much better to combine quality data with practical analysis, so that our clients can better understand what matters for their organisation. This article explains how to get started with these free resources and demonstrates how they can be pulled together into a unique analysis that provides critical insights for writing blog articles, whether you are an SEO writer, an everyday writer or an agency.

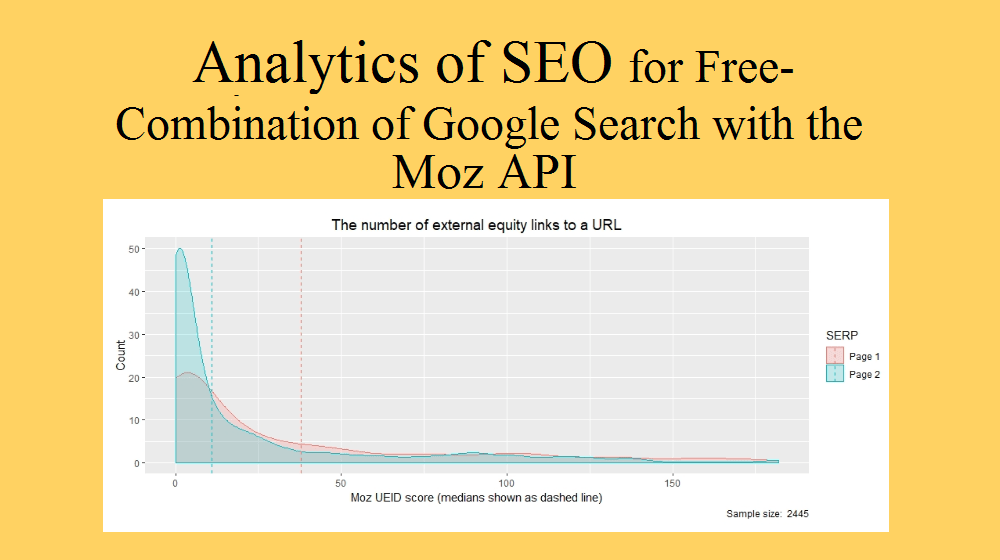

The scenario discussed in this article is an evaluation of a few SEO attributes, such as backlinks and page authority, and of their impact on Google ranking. I am interested in answering questions such as: can backlinks help get my blog onto the first page of the SERP? And what page authority score do I need to land in the top 10 results? To do this, I need to combine Google search result data with data on each result that captures those SEO attributes in a measurable way. You can also contact professional SEO service providers to configure the Google data and the Moz API for you.

Let's get started and work through how the following pieces can be combined to achieve this, all of which can be set up for free.

- Querying Google's Custom Search Engine

- Using a free Moz API account

- Collecting the data with PHP and MySQL

- Evaluating the data with SQL and R

Querying Google's Custom Search Engine

To do that, we need to query Google and store some of the results. To stay on the right side of Google's terms of service, we will not scrape the Google website directly; instead, we will use Google's Custom Search feature. It is mainly intended to let website owners offer a Google-like search widget on their own site. However, Google also provides a REST-based search API that is free and offers an intuitive way to query Google and retrieve the results in JSON format.
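
As a rough illustration of such a query, the sketch below builds a request URL for the Custom Search JSON API and extracts the ranked links from a response body. It is written in Python rather than the PHP used for this project, the helper names `build_search_url` and `parse_results` are hypothetical, and the sample response is made up so the snippet runs without an API key or network access.

```python
import json
from urllib.parse import urlencode

# Public endpoint of Google's Custom Search JSON API.
CSE_ENDPOINT = "https://www.googleapis.com/customsearch/v1"


def build_search_url(api_key, engine_id, query, start=1):
    """Return the request URL for one page of results for a query.

    `start` is the 1-based index of the first result on the page.
    """
    params = {"key": api_key, "cx": engine_id, "q": query, "start": start}
    return CSE_ENDPOINT + "?" + urlencode(params)


def parse_results(response_text, start=1):
    """Turn a JSON response body into a list of (rank, title, link) tuples."""
    payload = json.loads(response_text)
    items = payload.get("items", [])  # "items" is absent when nothing matched
    return [
        (start + offset, item.get("title", ""), item.get("link", ""))
        for offset, item in enumerate(items)
    ]


# A trimmed, made-up response used only for illustration.
SAMPLE_RESPONSE = json.dumps({"items": [
    {"title": "Example A", "link": "https://example.com/a"},
    {"title": "Example B", "link": "https://example.org/b"},
]})

if __name__ == "__main__":
    print(build_search_url("YOUR_API_KEY", "YOUR_ENGINE_ID", "seo backlinks"))
    for row in parse_results(SAMPLE_RESPONSE):
        print(row)
```

In a real run, the URL would be fetched with an HTTP client and the response body passed to `parse_results`, increasing `start` to walk through further pages of results.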

Using a free Moz API account

Moz provides an API (Application Programming Interface). Anyone can register for a Mozscape API key, which is free but restricted to roughly 2,500 rows per month and one query every 10 seconds. The current paid plans offer increased quotas, starting at $250/month. With a free account and an API key, you can then start sending your queries to the API.
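
The free-tier limits quoted above (one query every 10 seconds, about 2,500 rows a month) are easy to exceed in a loop, so it can help to wrap each call in a small throttle. The sketch below is one possible way to do that in Python; the `Throttle` class is hypothetical and not part of any Moz client, and it deliberately leaves out the API request itself.

```python
import time


class Throttle:
    """Space calls out by a minimum interval and stop at a monthly row quota."""

    def __init__(self, min_interval=10.0, monthly_rows=2500,
                 clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval  # seconds required between calls
        self.rows_left = monthly_rows     # rows still available this month
        self._clock = clock               # injectable so it can be tested
        self._sleep = sleep
        self._last_call = None

    def wait_turn(self, rows=1):
        """Block until the next call is allowed, then charge `rows` to the quota."""
        if rows > self.rows_left:
            raise RuntimeError("monthly row quota exhausted")
        if self._last_call is not None:
            remaining = self.min_interval - (self._clock() - self._last_call)
            if remaining > 0:
                self._sleep(remaining)
        self._last_call = self._clock()
        self.rows_left -= rows
```

Calling `throttle.wait_turn()` immediately before each request keeps a collection script inside both limits, assuming the quota counter is reset by hand at the start of each month.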

Collecting the data with PHP and MySQL

Now that we have the Google Custom Search Engine and the Moz API, we have everything required to capture the information. Both Google and Moz respond in JSON format, and both can be queried from many programming languages. My language of choice was PHP, and I wrote the results from both Google and Moz to a database, for which I chose MySQL Community Edition. Other databases could be used as well, such as Microsoft SQL Server, Oracle or Postgres. Doing so lets you persist the information and analyse it ad hoc using SQL and other languages. Once the database tables are created, one to hold the Google search results and one to hold the Moz data fields, we are ready to start collecting data.
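
To make the two-table layout concrete, here is a minimal sketch in Python that uses the built-in `sqlite3` module as a stand-in for MySQL, so it runs without a database server. The table and column names (`google_results`, `moz_metrics`, `page_authority`, `external_links`) are assumptions chosen for illustration, not the schema used in this project.

```python
import sqlite3

# Hypothetical schema: one table per data source, joined on the page URL.
SCHEMA = """
CREATE TABLE IF NOT EXISTS google_results (
    id       INTEGER PRIMARY KEY,
    query    TEXT    NOT NULL,
    position INTEGER NOT NULL,   -- rank of the result for this query
    url      TEXT    NOT NULL,
    title    TEXT
);
CREATE TABLE IF NOT EXISTS moz_metrics (
    url            TEXT PRIMARY KEY,
    page_authority REAL,
    external_links INTEGER
);
"""


def open_store(path=":memory:"):
    """Open (or create) the database and make sure both tables exist."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def save_google_results(conn, query, rows):
    """Store search results; `rows` is an iterable of (position, url, title)."""
    conn.executemany(
        "INSERT INTO google_results (query, position, url, title) "
        "VALUES (?, ?, ?, ?)",
        [(query, position, url, title) for position, url, title in rows],
    )
    conn.commit()


def save_moz_metrics(conn, url, page_authority, external_links):
    """Insert or refresh the metrics held for one URL."""
    conn.execute(
        "INSERT OR REPLACE INTO moz_metrics "
        "(url, page_authority, external_links) VALUES (?, ?, ?)",
        (url, page_authority, external_links),
    )
    conn.commit()
```

Keeping the URL as the shared key means each page's metrics only need to be fetched once, however many queries it ranks for.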

Evaluating the data with SQL and R

The real fun begins once the data has been collected, as it is finally time to look at what we have in our hands. This stage is also known as data wrangling. For this I use R, a free statistical programming language, together with its development environment, RStudio. Other languages such as Stata and graphical data science tools such as Tableau are also used for this, but they cost money, and the finance director at Purple Tools is not someone to be crossed.
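
As one example of a first wrangling step, the sketch below joins search positions to page metrics and compares first-page results with everything else. It uses Python with `sqlite3` instead of R so that it runs standalone. The table names, column names and rows are all made up for illustration and are not findings from this analysis.

```python
import sqlite3

# Average authority and link counts for top-10 results versus the rest.
COMPARE_SQL = """
SELECT CASE WHEN g.position <= 10 THEN 'top10' ELSE 'rest' END AS bucket,
       COUNT(*)              AS n,
       AVG(m.page_authority) AS avg_pa,
       AVG(m.external_links) AS avg_links
FROM google_results AS g
JOIN moz_metrics AS m ON m.url = g.url
GROUP BY bucket
"""


def compare_first_page(conn):
    """Return {bucket: (row count, mean page authority, mean external links)}."""
    return {
        bucket: (n, avg_pa, avg_links)
        for bucket, n, avg_pa, avg_links in conn.execute(COMPARE_SQL)
    }


def demo_connection():
    """Build an in-memory database holding a few made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE google_results (position INTEGER, url TEXT);
        CREATE TABLE moz_metrics (
            url TEXT PRIMARY KEY, page_authority REAL, external_links INTEGER
        );
    """)
    conn.executemany(
        "INSERT INTO google_results VALUES (?, ?)",
        [(1, "u1"), (4, "u2"), (15, "u3"), (28, "u4")],
    )
    conn.executemany(
        "INSERT INTO moz_metrics VALUES (?, ?, ?)",
        [("u1", 60.0, 100), ("u2", 40.0, 50), ("u3", 20.0, 10), ("u4", 30.0, 20)],
    )
    return conn


if __name__ == "__main__":
    for bucket, stats in compare_first_page(demo_connection()).items():
        print(bucket, stats)
```

A gap between the two buckets only hints at a relationship; it does not show that backlinks or authority cause a higher ranking, which is where a statistical tool such as R comes in.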

Author Bio

Isabelle Isreal holds a bachelor's degree in computer science and is currently pursuing her master's in the same field. She has been working with Dissertation Help UK for the past three years, providing technical assistance.